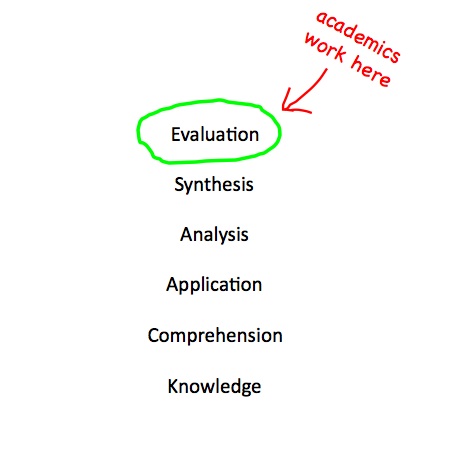

Bloom’s Taxonomy of learning, first published by educational psychologist Benjamin Bloom and his colleagues in 1956, revolutionized the science of education by allowing the cognitive level at which students and teachers work to be classified on a simple scale. Professional academics, for example, regard any work that does not reach the sixth and highest level, evaluation, as derivative. A proper work of evaluation requires one not only to understand the fundamentals of a given topic, but also to weigh the competing perspectives of other scholars before reaching a coherent and original conclusion.

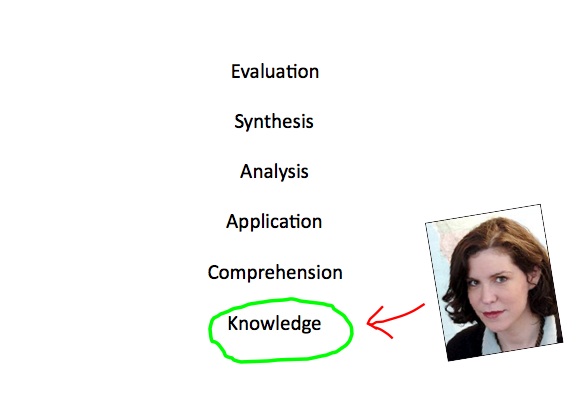

On a rare occasion when Megan McArdle bothered to ground her suppositions in fact, and therefore performed what a professional would call ‘learning’, McMegan arguably reached level one. McMegan correctly summarized the argument of one relatively dated theoretical report on healthcare spending and innovation, without noting that numerous equally qualified professionals disagree. McMegan also did not note that the same authors later tested their model in the real world and concluded that their earlier study was wrong [correction – cannot fully explain what happens in the real world].

One can also reach Bloom’s first level by opening the newspaper and reading a paragraph at random. Reading two paragraphs in order, you will probably pick up context and reach level two. Middle schoolers who hope to earn an ‘A’ grade typically reach level 3, Application, on a regular basis. Glibly making crap up, on the other hand, generally won’t net you better than a gentleman’s ‘D’.

***Update***

Note the correction. Also, below the fold, I have reprinted with permission a summary that Tom sent me by email last night.

Megan McArdle’s response to being caught in a bit of make-believe is to assert her commitment to analytical rigor, a claim she defends by pointing to her mastery of the academic literature. In an example focusing on the question of whether or not a reduction in Big Pharma revenue will lead to a loss of life expectancy, she uses the rhetorical trick of both claiming and appealing to academic authority to suggest that advocating cost controls in health reform is tantamount to knocking grandma on the head.

Unfortunately, she gets the argument wrong, and she does so in a very suggestive way. She understands the form of academic discourse: citation of prior work is part of both the labor and the rhetoric of scientific communication. But she misses the actual point, which is that you have to check what people say; you can’t just take reported results on faith. This is especially true for those who are not themselves within the discipline being cited. Real experts develop all kinds of shortcuts to get to the point of new work (though they can certainly get tripped up too), but those of us who want to use such work to inform what we write for broad consumption have to put some effort into figuring out what is going on.

And what I spend way too many words doing is showing several of the different ways in which McArdle failed to do so. She didn’t detect (or perhaps she didn’t care about) the obvious conflict-of-interest problem in the core piece of research she cites. She failed to notice what was missing in that paper: all the methods and assumptions and cautions about the limits of work making a very large claim, all those sections that a careful reader of the literature would have known were signifiers of serious work, and whose absence suggests the reverse. She does not appear to have noticed, or at least questioned, the degree to which the conclusions turn on assumptions not in evidence, or not rigorously defended. (I’m thinking here of a very fraught claim about the relationship between drug company innovation, as measured in drug approvals, and longevity. I didn’t go into this in an already too-long post, but the connection, and the assertions of very specific amounts of life lost, turn on essentially one researcher’s work, results that are not by any stretch taken as common wisdom at this point, and for a lot of good reasons.)

She didn’t ask, that is… She never seems to have done what any honest journalist and any good scientist would do as a matter of routine: think, just for a moment, “Does this make sense? What could be wrong here?”

If she had, she would have tumbled to the deeper and more important failure she committed here. In her attempt to demonstrate her morally superior attention to the actual research base, she cites a second paper that does not, in fact, say what she thinks it does. She either didn’t actually read it, or she didn’t understand it when she did…and then she committed the one true sin of any journalist: she didn’t pose the question to someone who could have straightened her out.

C Nelson Reilly

You forgot to post your +7 at the end there

TenguPhule

Sadly, even simplified, this post is still above Megan MilkCurdle’s level of understanding.

Paul

Megan is a self-righteous ideologue with a mere handful of regular readers. Please, please, please ignore her and let her blog die a natural, ignoble death.

Martin

Evaluation is hard. Pundits, Republicans, Libertarians don’t do hard. In their world, simple, clean, easy is fundamentally better. If you have to go past ‘comprehension’, you’re doing it wrong in their world.

Warren Terra

Y’know, we don’t see nearly enough of the “excellent links” tag. It would be a good tag to see more often (with, of course, links that justify its application)

Anne Laurie

You do realize, Tim, that you’ve just given the resident spooftroll another number-mantra to add to his favorite schtick? Bloom’s Taxonomy will soon be defiled in wordsalad postings as the Six Educations, right between the Five Senses and the Seven Liberal Arts, or whatever this week’s drone is counting…

argystokes

Actually, being able to summarize is level 2 (comprehension).

John Cole

I just like that I fell asleep on the couch, woke up to go to bed, and in between found that someone brutalized McSuderman. My life somehow feels more full.

Fulcanelli

Such a scholarly and sophisticated post, Tim. I feel humbled by my crude and admittedly feeble contribution to this thread wherein I admit the best I can come up with is that I’d like to slap that trust fund smirk off her face and take her lunch money.

Well done.

Jason Bylinowski

Levenson is always goofing about how long-winded he is, but really, those 4,000 words flew by pretty easily. Long-winded or not, that’s an accomplishment, as I typically don’t read long-form on the internet.

Judging by the fact that this isn’t the first (or even the third) time I’ve been by his site to read his criticisms of McArdle, I’d guess that he has a little bizarro crush on her. Anyway, it was a great takedown. Do you guys ever just sit there after having read something so awesome, and just imagine the look on the face of the victim of the piece when they finally muster the courage to read it? Man, to see McAddled read this stuff… the tension would be so thick you could eat it with a spork.

Yutsano

@John Cole: Did Tunch massage you whilst you were napping?

Calouste

@Jason Bylinowski:

Uh, no. Because to have that effect on McArdle, she would have to be able to get to level 2, Comprehension.

Warren Terra

It’s perhaps fitting that she’s marrying an astroturf specialist given that much of Levenson’s post is about how the single study she hung her argument on may be the research equivalent of astroturf.

Joel

Tim’s summary of Tom’s argument isn’t correct.

The paper Tim is referencing (http://economics.uchicago.edu/download/innovationmarketsizesep22.pdf) had a theoretical component, but an empirical one as well, as Tom notes.

The later paper (http://econ-www.mit.edu/files/4473) doesn’t find that the earlier one is “incorrect.” Tom doesn’t present a good summary of it. The conclusion is

That said, Amy Finkelstein (one of the co-authors of this second study) suggests funding basic research and prizes as the best ways to increase innovation (http://voices.washingtonpost.com/ezra-klein/2009/08/in_defense_of_experts.html). As Ezra highlights, arguments about stifling innovation are not generally being made in good faith (http://voices.washingtonpost.com/ezra-klein/2009/09/ignoring_innovation.html).

Matt

As a teacher, I find it crazy that there is a post on Bloom’s Taxonomy… you can’t just be giving all of our academic code-speak away! CRAZY I SAY! (head explodes)

Ruckus

@Martin:

Are you saying that the easy button was, dare I say it, invented by a rethug? Or is it just that the concept of a large button one can press to make everything happen without any thought or actual work suits the rethugs’ intelligence and work ethic?

FMguru

McCardle doesn’t think, she knows.

It’s one of the most seductive aspects of Libertarianism. You don’t have to sift through actual facts and conflicting data and messy empirical studies. All you have to do is adopt a handful of tenets (the government always ruins everything, the free market always knows best, greed is a social good, etc.) and just derive all the facts of the universe from them (dear God, the primary expression of their philosophy is called “Objectivism,” as if it had been rigorously empirically proved and couldn’t be argued against). It’s a nice shortcut that avoids any actual thinking and leaves lots of time for patting yourself on the back for your cleverness and edgy refusal to be like the rest of the sheeple.

Of course, it’s also complete bullshit, which is why most people outgrow it once they get out of their angry teenagehood. Not our Megan, though! She knows that private industry is one million times more efficient at saving lives than the stupid wasteful government, so her “research” is just googling for academic-seeming links that agree with her. She has no interest in digging into the issue from all sides, or considering her sources, because she already knows the answer, and so why waste her time with all that?

This is why reading her is so amusing at times. Her beliefs are based entirely on a handful of stubborn prejudices, so when she gets called on them or contradicted by the facts, it sets off a tizzy of confusing restatements, goalpost-shifting, declarations that the matter is “closed”, backtracking (“well, that number I based my entire argument on was obviously hypothetical”), blame-shifting, and so on – all made better because she never drops her pose of informed, world-weary sage who understands how the economy really works.

Batocchio

Interesting post – and I’m off to read that series now.

The thing is, as Ezra Klein basically said earlier this year, McMegan is not interested in problem-solving. Per the chart above, she’s not interested in comprehension or anything higher, and often not even knowledge. She can do a bit of name-dropping, but she is not bright, nor honest, nor thoughtful, nor thorough, nor coherent, nor a good writer, nor a kind human being. She reminds me of the Bush gang she supported as they pitched for war: flinging whatever shit they could grasp, as fast as they could, hoping something would stick and that no one would call them out. They’re hacks and zealots, not wonks, and honest, rational discussion just doesn’t come into it for them. They know what they want, they have their privilege, and they’re only trying to justify it and sell it.

If Megan McArdle had any talent, she’d be Betsy McCaughey. On the Taxonomy of Sith, McMegan merely achieves “vile, self-absorbed twit” rather than “evil and influential.”

geg6

Sighs. I need a cigarette after that. Thank you, Tom Levenson. And thank you, Tim F. You guys sure know how to show a girl a good time.

slightly_peeved

Well, I’d say a fair proportion of people don’t; it’s just most of them are referred to as creationists, fundamentalists, etc.

A sizable portion of the population want a rigid ideology as a substitute for actually thinking about things. Objectivism caters for the ones that want to be able to keep their rigid doctrine while still being able to sneer at the religious and the political left.

Tom Levenson

@Joel: Joel,

Thanks for the comment. You are right of course: the ALCF study does not say (and nor do I, pace other summaries) that the AL is wrong. Rather, as I confirmed by talking to some of the folks closer to the study than I, the later work is a confounding finding.

That is: my point was that while the second piece of work certainly doesn’t prove that innovation is uncorrelated with shifts in revenue or expanded sources of funding, it does suggest that the connection between funding and innovation is ill-understood… which is exactly the point, as I read it, of the conclusion you state.

Remember: my goal in this series was not to produce a solid piece of journalism on innovation in drug discovery and applied R and D. Rather, it was to show, just by beginning the exercise, the difference between serious attempts to make sense of a complicated problem… and the approach the McArdles of the world pursue.

And — Ezra is IMHO exactly right. If I had read his few hundred words, before launching into the above, I might have saved myself a few thousand. ;)

The Bearded Blogger

@Paul: please, please, please, ridicule her and make visible the crazy unravelling and utter intellectual bankruptcy of the right… the visible, pathetic, unignorable signs of the right’s fall must at all times be presented, mocked and scorned…

if only because it is great fun!

@Martin: a kind of solipsistic fallacy wherein, if I don’t get it, it’s probably false

@Jason Bylinowski: That is, assuming she understands the criticism, or is capable of reflective, self-critical thought

@Ruckus: If only we could FIND that button… damn liberals are probably hiding it… used it to elect that negro socialist…

@FMguru: there is a joke about economists that seems fitting here: they’ll ask “that may be true in practice but… is it true in theory?”

El Cid

I’m so glad our national media feels the need to promote lazy, dishonest, shallow ideologues to positions of national media exposure, so that they can defend and advance the arguments of the venal pro-super-rich and any war they can imagine.

I’ll remember the next time I forget not to listen to “Marketplace” and they turn to “the Economy” and the views of Megan McAddled.

I’ll remember the next time I nearly vomit because someone discusses people like David Brooks as real thinkers, because, you know, as long as you’re vaguely less screamingly idiotic than the next right winger, you’re some sort of serious intelskual.

The Bearded Blogger

@El Cid:

Bill Krystol is a serious intelskual. Whatever THAT means, because an intellectual he ain’t.

Think of how much better the world would be if all the time given on teevee to Bill Krystol was given to Billy Crystal instead, opining on the same topics. In a similar train of thought, I think Michael Bolton would have made a much better UN ambassador than John

Michael D.

We use a simplified version of Bloom’s (sort of) where we look at training and say, “Where do we want our students to be when they’ve completed this course?”

Awareness

Knowledge

Skill

Mastery

When I think of Megan, and most of the “intellectual” (*cough choke*) right, I see them at barely an Awareness level of AKSM when presented with a topic. For example:

“I’m Aware there’s something going on with healthcare in this country, but I have no Knowledge of any of the details, nor do I have the mental Skill to Master the topic.”

or:

“I’m Aware that we have a president and I have Knowledge that he is a Skilled negro. Why does he not have a Master?”

The Bearded Blogger

@Michael D.:

When I first read that, I thought you were referring to a master’s degree. How naive am I?

fuddmain

The Car Talk slogan fits McArsle perfectly:

Non Impediti Ratione Cogitationis Conloquium Currus

(Unencumbered by the thought process)

Zach

I posted this in one of McArdle’s many threads on this:

Her response: “Because it makes us better off. It’s a pity that other countries free ride, but those are the choices we have.”

I believe that since then (all of two months ago), she’s shifted from saying it’s reasonable to subsidize the world’s drug market to saying that incredible hazard lies in any attempt to pay less for drugs. Of course, we’re talking about a public plan of 10 million people or so… when we already have negotiated pricing for much larger pools between Medicare, Medicaid, and the VHA.

SenyorDave

I always thought the entire libertarian creed was summarized by St. Ronnie:

The nine most terrifying words in the English language are, ‘I’m from the government and I’m here to help.’

Of course, that doesn’t apply to libertarians in the South when a hurricane hits, or libertarians in the Midwest when a tornado hits, or libertarians on Medicare, or libertarians who bought a house with a gov’t loan program, or libertarians who attended a state college, or libertarians who hate gov’t until they need it, then love it while they use it, and immediately go back to hating it.

Whenever I read one of these shitbags screaming about how private industry is so wonderful I think we should have a system where they sign a waiver that they will not use anything the government has had a hand in, and then they can not pay taxes.

Let them drive on their private roads, send their kids to private schools that take absolutely no gov’t money, etc.

If that removes Galt-wannabes like McArdle from society, and stops the incessant whine about how great things would be if teh gov’t would go away, it’s worth the loss of tax revenue.

Svensker

@John Cole:

You found this out while you were dreaming? Sweet dreams!

slightly_peeved

I wonder if McArdle is aware that the US government already subsidises medical research by, well, subsidizing medical research. The NIH subsidises a large amount of research into cancer, and the one area where the US does well compared to other nations is certain cancer survival rates.

Punchy

Who the fuck is Megan McArdle?

inthewoods

I had a great moment the other day – I was listening to Marketplace on NPR, which for some reason brings Megan on to comment on the economy and anything else they throw at her. They asked her about the healthcare bill and she stated that liberals were dreaming if they thought it would pass. She also made a silly argument about how it would destroy the insurance industry.

But given that she’s wrong about just about everything – and now that it looks more likely that something will pass – I just know to take whatever Megan says and reverse it to get an accurate picture.

Of course, why Marketplace, a program I rather like, continues to have her on the show is just beyond me.

Michael D.

@SenyorDave:

Of course, this is all in addition to the fact that the more Republican (red) states are the beneficiaries of FAR more government dollars than their Northern (blue) neighbors.

For every tax dollar they pay, red states receive a disproportionate amount back in Federal Redistribution.

There’s a name for that type of redistribution of wealth, but it escapes me right now.

Morbo

@Tom Levenson: I was interrupted halfway through part 4 last night, so I didn’t get the payoff ’til this morning. Excellent takedown of what passes for serious thought around Glibertaria, not that this method of passing along uncritical facts is unique to them. There is, as you say, a preponderance of journalists who fail spectacularly at science reporting in pursuit of the most salacious headline. McArdle’s laziness is not unique at all. I think it’s fair to say, though, that she’s in a special place when it comes to mendacity.

Bhall35

Boy, this was a good way to start my day.

Zifnab

@Michael D.:

And yet, she’s got column space, a salary, and credibility. Amazing how these things have become completely disjointed.

They said if you gave 1000 monkeys 1000 typewriters for 1000 years, they might finally produce the combined works of Shakespeare, but I’m pretty sure you could get a McArdle column with 10 monkeys, 2 typewriters, and a weekend, so long as the monkeys were getting kickbacks from the insurance industry.

SenyorDave

The laziness aspect is something that interests me. It seems like a significant number of prominent conservative politicians are lazy, and almost trumpet their laziness as a virtue.

For example, John McCain on his knowledge of economics:

McCain said … “The issue of economics is something that I’ve really never understood as well as I should. I understand the basics, the fundamentals, the vision, all that kind of stuff,” he said. “But I would like to have someone I’m close to that really is a good strong economist. As long as Alan Greenspan is around I would certainly use him for advice and counsel.”

How in God’s name could this man have been the presidential nominee?

Then there’s Bush who spent a significant portion of his presidency on vacation. Reagan, who had his advisors running the country while he slept.

Palin actually is the perfect Republican for today, although in her defense, in addition to being lazy she is actually not too bright. But as one of the Republican legislators in Alaska said, “she loved being governor, she just didn’t like having to govern.” Because then she had to actually work!

On the other hand, the WSJ publishes editorials about Obama being too interested in policy. God forbid we should have an educated, hard-working president. After all, Bush showed us what 8 years of mailing in the job does to a country.

Zifnab

@Michael D.:

And the real kicker is when you check where all that tax money is going. They’ll drop twenty or thirty million restoring the Alabama coast, beautify the Mobile suburbs, then flip the entire north side of the state the bird when some minority needs school renovations or hurricane relief.

I mean, there’s a certain economy of scale. Build a highway in New York, and you’re servicing five or six million people. Build it in Wyoming, and you’re servicing maybe 5% of that. But you can’t just not build anything in Wyoming.

Then you look at the yuks in Arizona refinancing their freak’n state capitol for 20 years to cover a one-year deficit, because that’s more ideologically acceptable than a 4% sales tax increase.

Red States are these giant sucking pits of waste and fraud. It’s not even like the extra tax money we spend is going towards a good cause.

wrb

A delightful takedown and not too long at all.

Davis X. Machina

Bloom’s taxonomy is in itself derivative.

Begins with recounting someone else’s fable (knowledge), ends with forensic attack and defense of proposed legislation (evaluation).

The Greeks generally did it first…and it often hasn’t been done better since.

1. Often hijacked by, and claimed to be the basis of curricula in, modern Christian (or ‘Christian’) academies. Do not confuse these with the real thing.

Davis X. Machina

Well, the tag doesn’t work…

Wag

The act that created Medicare Part D specifically PROHIBITS the govt from negotiating lower prices for drugs for Medicare. Medicaid and the VA do have the ability to negotiate.

aimai

Batocchio pretty much nails it with this:

But, of course, everyone else is right too. It’s cold comfort, I know, but when you have fully grasped that Megan has the intellectual and moral wattage of a creeping form of foot fungus you also grasp, to the full, that there is… how shall I put it?… a moral, political, and intellectual imbalance between our side and theirs. If the far right/corporatist stooges had the slightest pretense to an argument they simply wouldn’t have to pay Megan to pretend to think for them in public. Sure, they go to war with the public intellectuals they’ve got, not the ones they want. But the fact of the matter is that an infinite number of Megan Mcarglebargles, typing an infinite number of Atlantic columns, still doesn’t produce anything remotely resembling an honest, thoughtful, moral, historically relevant, or even factually correct argument in defense of libertarian/rightist/corporatist policy. And someone could be making those arguments, you know? Somewhere in this vast, whirling globe you could find someone who might make some of those arguments honestly and thoughtfully. We have tons of people on our side making honest, thoughtful arguments in defense of our policies every day. But somehow they have to resort to Megan? In the end I have to suspect that is because the last honest conservative thinker (Arthur Silber comes to mind?) left the building because he couldn’t square conservatism, or libertarianism, or even thought with what is going on right now on the right wing.

aimai

Ken

Yay Bloom’s taxonomy! Personally, I use Perry’s scheme more to evaluate students’ intellectual development and design exams… but Bloom works too!

Wag

My first lecture in medical school was an eye-opener. Dr Herzog (no doubt a liberal academic type) declared that “Half of everything that you are about to learn in the next four years is wrong. We don’t know which half it is, so you have to learn it all. It is, however, incumbent upon you to keep an open mind, and to move past the things that you learn that are proven incorrect.”

During my career, there have been many dogmas that have been abandoned, which in theory were going to promote health, but when subjected to rigorous clinical trials proved to kill patients rather than help. Hormone replacement therapy (HRT) for women is a great example. It can help to treat the symptoms of menopause, but that is its only value. Contrary to our previous theory, estrogen does not prevent heart disease or Alzheimer’s disease; it instead INCREASES the risk of those diseases. It took a well-designed clinical trial to break the momentum of HRT, to knock down the orthodoxy which had developed around the theory.

In many respects, we need to look at the various health care systems around the world as an ongoing clinical trial in which patients are randomized (through luck of residency) to different systems. Our system has been proven to cost more and to have overall inferior outcomes compared to other systems, yet the GOP clings to the “knowledge” that our present system must be superior because the theory says it should be. The right will cite “confounding” based on more homogeneous populations in some countries (Canada), but will ignore the countervailing example of a country like England, which has a very racially diverse population. The right will claim that we cannot compare our health system with the rest of the world’s because you would be comparing apples to oranges. I disagree. Health systems are all apples. We are comparing our system, which is like a sour crab apple, to the rest of the developed world, where they eat a variety of apples: Golden Delicious in Canada, Pink Lady in France, Haralson in England, you get the point. Rather than claiming that our sour apple is the best, we should be open to trying a new breed.

Brick Oven Bill

This is an interesting post. Knowledge, as I see it, is information gathered from our five senses (sight, hearing, touch, taste, smell). Comprehension would be its own biochemical thing, rooted in evolution. The next four would be an application of the seven Liberal Arts (application, analysis, synthesis, evaluation).

And I agree with the statement that Meghan mostly is limited to the level of sensory perception, and academics operate at the level of evaluation. But Luke, evaluation does not prepare you for Darth.

In order to operate at the level of an ‘effective executive’, striving to become a ‘leader’, one must re-apply his five senses to evaluation. In this manner a twenty-two year old 2nd Lieutenant in the infantry has the opportunity to become a Captain. One hopes that 46 years of age is not too old to re-apply the five senses.

slag

I’m still stuck on the part where McArdle grounded her supposition in fact. Inconceivable!

Koz

Get a life. McArdle is just about the only thing worth reading in The Atlantic.

Napoleon

@Zach:

Great post you put on her site. IMO there is nothing that highlights the idiocy of their position more than what you point out. The free market can’t go wrong in their world until you are talking about our government paying supra-market rates for drugs; in that event we can’t trust the free market to normalize what each country pays for drugs so that the drug companies still make their profits.

That amounts to totally inconsistent positions that reveal them for exactly what they are: apologists for the rich and business interests.

Seanly

y’all are being too nice to Megan. She’s not mis-reading or even uninterested in knowledge or comprehension. She is a stupid ideologue hack. Like many conservative and libertarian bloggers, she’s a blowhard in inverse proportion to her lack of real understanding of economics, human nature, etc. etc.

What aimai sez. Also, too.

fixted

burnspbesq

I am not clear as to why we are revisiting the subject of McArdle. Is there new information that casts doubt on the long-standing consensus that she is biased, intellectually dishonest, and generally unworthy of being taken seriously?

slag

@Napoleon:

?

Ben Richards

@FMguru: Now THAT is a take down. I may have to cut and paste it for future reference. Well done!

slag

@slag: Not Napoleon. I clicked the wrong stupid arrow. And I was too dazzled by the stunning rhetorical adroitness displayed by @Koz to notice.

Napoleon

@Wag:

That is similar to a talk my favorite history professor (my major) in undergraduate would make at the very beginning of each of his classes (and by the way I know he was a liberal since he headed the local ACLU and his wife was a civil rights attorney). Basically it was “ultimately you are not here to learn facts but to learn how to think for yourself so that you can ascertain the facts yourself for the rest of your life”.

Napoleon

@slag:

At first I thought “did I say that?!”

Brick Oven Bill

“One can also reach Bloom’s first level (knowledge) by opening the newspaper and reading a paragraph at random. Reading two paragraphs in order, you will probably pick up context and reach level two.”

This I strongly disagree with. The newspaper might read:

“The apple in the box is green.”

But you would not know that the apple in the box is green from reading the newspaper. In order to know that the apple in the box is green, you would have to open the box and look at the apple.

Brick Oven Bill

Here, #58 is explained in great and lasting detail.

Notorious P.A.T.

Megan strikes me as a social promotion all the way.

Zach

@Wag: I was overgeneralizing, but that’s quite right.

I love that libertarians and conservatives are arm in arm warning about the moral hazard of eliminating government waste.

Edited because I noticed that edit’s back! Also, note the incongruity between complaining about the inefficiency of stimulus spending and defending the inefficiency of drug spending.

Notorious P.A.T.

As if believing in something that’s completely unfounded just because you want it to be true isn’t the very height of religion.

slag

@El Cid:

Or Newt Gingrich. NPR went to crazy town at some point in the last 5 years or so and they rarely look back. They’ve lost all my financial and volunteer support, and the only program I’ll regularly listen to now is This American Life.

DZ

@Napoleon: Good professor. My father phrased it differently, but he told us early on that the primary purpose of education was to learn to read, write and speak effectively and to think critically. Everything else was just data points that we could learn when we needed to do so.

Emily

@Koz:

If you think McArdle is worth reading for anything other than a horrified chuckle that her dreadful writing passes for professional-grade quality, you’re dumber than she is. She’s a snobbish, pretentious, immodest, and incurious hack all-too-convinced of her imaginary smarts.

Tim F.

@Brick Oven Bill: A very good point. I think that education experts generally classify ‘knowledge’ as a datum that you pick up from whatever source, even thirdhand. If a person brings critical thinking to the table and starts filtering for reliability, then he or she has already stepped up a couple of Bloom levels.

Wag

Well said! Can I steal this quote to use against my brother-in-law?

kay

Health care reform debate has had the unintended consequence of really highlighting the difference between good solid work on presenting complex information and ideas and total pulled-out-of-the-ass crap.

There is more meat in one Ezra Klein column than in the total collected works of the conservative and mainstream punditry on health care since May. This is an issue you can’t fake. It’s not like foreign policy musing, or vague speculation on free market theory. Everyone uses the health care system. Everyone has direct knowledge and an opinion.

So many failed the test. Andrew Sullivan failed health care reform 16 years ago and continues to fail, daily; this person failed; the Washington Post health care reporter Ceci Connolly failed; all of cable news failed; the list goes on and on.

It calls all the other work into question. They were given a really difficult test on a Big Issue, and most of them flunked.

Sloegin

TL;DR.

Complex analytical proposition that said commenter is dumb. Much easier proposal is that same is a mendacious bought hack.

WereBear

@slightly_peeved: Not only that:

It’s also a question of responsibility.

Strange as it may seem, there’s a lot of people who do not risk making their own decisions, because they would have to own them if they were wrong.

They truly prefer things to be screwed up… as long as it’s not their fault.

geg6

@kay:

Meanwhile, as people argue about how truly stupid McMegan is (common-grade glibertarian stupid or truly 100% Rand-level stupid), the Dems are going to work. I have now read three articles on a proposal that seems to me (and, apparently, Sam Stein, JMM, and Ezra) to be (as Sam puts it) the “silver bullet”:

http://www.huffingtonpost.com/2009/10/07/dems-discussing-public-op_n_313054.html

On first glance, I like it. I don’t love our red state friends getting screwed on this (and that is surely what will happen in the short term), but it has a real chance of passing and will let the wingnuts make their stand on the backs of their constituents. Who may actually throw the bums out once they see how badly they will be getting screwed.

Tax Analyst

WereBear said:

“Strange as it may seem, there’s a lot of people who do not risk making their own decisions, because they would have to own them if they were wrong.

They truly prefer things to be screwed up… as long as it’s not their fault.”

Yes, “The Innocent Bystander Defense”.

“Don’t blame me, I didn’t do nuthin.”

But “doing nuthin” ends up allowing folks like the Bush-shites to run our affairs like a venal version of the Three Stooges. To be uninvolved when the country is being run by mendacious morons is no virtue in my book. Of course, now that we have a thoughtful and deliberative President who seems to want to actually better the lives of the general population we have the spectacle of seeing folks that Obama is actually fighting FOR pissing into their boots about how awful that is.

These folks were satisfied with Bush & Co. because Dubyah and his Stoogistas presented all their various bullshit as though they were Undeniable Truths. Note that no matter how outrageous or wrong-headed the lie they would never back down, admit fault, accept blame, or (gasp) apologize.

dr. bloor

@Warren Terra:

It’s perhaps fitting that she’s marrying an astroturf specialist given that much of Levenson’s post is about how the single study she hung her argument on may be the research equivalent of astroturf.

Remind you of anyone?

Alvy Singer: Here, you look like a very happy couple, um, are you?

Female street stranger: Yeah.

Alvy Singer: Yeah? So, so, how do you account for it?

Female street stranger: Uh, I’m very shallow and empty and I have no ideas and nothing interesting to say.

Male street stranger: And I’m exactly the same way.

slippy

@Napoleon:

This is why conservatives fear college educations and intellectuals. What they want is for learning institutions to dispense facts as if they were a product. The facts that are convenient for them, preferably. And they really don’t get the whole critical thinking part which is why they look at both sides of an argument and decide that one of them is right based on how they feel about it, or why they feel that facts such as evolution and astrophysics need fiction such as the Bible for balance, because truth is just a marketing campaign, and whoever has the most compelling campaign should win.

Thus, conservatives have a virulent disdain for intellectuals, and people who know what the fuck’s going on often don’t know how to handle conservatives because it sounds like they’re not only wrong, they’re fucking crazy.

ericblair

@Napoleon: Basically it was “ultimately you are not here to learn facts but to learn how to think for yourself so that you can ascertain the facts yourself for the rest of your life”.

Teach a man a fact, and he’s smart for a day. Teach a man to reason, and he’s a pain in the ass to his social betters for the rest of his life.

Crusty Dem

All this talk of drug company innovation extending life span is absurd. With a tiny handful of exceptions, this is barely a goal of drug company research, which really has a single goal: get a drug through Phase III clinical trials so it can be handed off to the massive marketing monstrosity that is the core of drug companies in the US.

When R&D isn’t dwarfed by marketing, I’ll consider whether drug companies could be beneficial. Until then, they and their glibertarian supporters can fuck off.

trollhattan

@Anne Laurie #6 meet bOb #47. Anne Laurie FTW, which puts her leagues ahead of McMegan in the realm of adaptive learning.

Is our McMegans learnin’? Nadachance.

Fulcanelli

@Notorious P.A.T.: A “Social Promotion?” Is that what they’re calling it these days?

I am soooo out of the loop these days being a boot-strapping, self-employed liberal.

Xanthippas

Well, I went to the “longer” Levenson (that is, I read all four of those posts) and yes, he destroys McArdle. I think his conclusion sums up why it’s necessary to counter these fact-free bozos as well as anything I’ve read elsewhere:

In my mind, the health care “debate” is a summary of the worst of this. On one side (those of intellectual and moral integrity on the right and the left) you have people making good faith arguments. On the other side you have idiots making hyperbolic and blatantly untrue claims, and you have idiots like McArdle “reasonably” justifying those untrue claims with cites to biased research that they themselves do not understand and don’t bother to confirm, while smugly telling us that they are the ones doing the “real” research and fact-checking.

I still respect the Atlantic magazine (what actually gets printed on paper), but that they permit anyone to engage in such nonsense under their banner certainly demeans them in my eyes.

Xanthippas

FYI, those last two paragraphs are mine. Blockquote effed that up.

Wile E. Quixote

I think that given the way things are going in this country we need to have a “taxonomy of teh stoopid” to describe the likes of McMegan McArdle and her child bride, McPeter McSuderman.

HyperIon

Tim F. wrote: One can also reach Bloom’s first level by opening the newspaper and reading a paragraph at random.

Just a quibble here.

Certainly one USED to be able to do that but now the quality of reporting is so bad that this “learning” mechanism is largely unavailable.

See WaPo’s “Army Officers Criticize Rebuke of Gen. McChrystal” for an example of multiple instances of reasoning FAIL by the reporter.

HyperIon

@aimai wrote: Its (SIC) cold comfort, I know, but when you have fully grasped that Megan has the intellectual and moral wattage of a creeping form of foot fungus you also grasp, to the full, that there is…how shall I put it?… a moral, political, and intellectual imbalance between our side and theirs.

I have this very thought sometimes, although I haven’t ever expressed it in such a nice sentence. However, I try to discourage myself from heading in this direction because my statistics background makes me doubt that folks on the right are morally, politically, and intellectually “backward”.

So I tend to chalk this up to a sampling problem, as you imply in the latter part of your comment. That is, there are many non-idiots on “their side” but they are choosing to remain silent at this time. Understanding WHY they choose to remain silent is probably more important than understanding that the non-silent ones on “their side” are idiots.

My 2 cents.

HyperIon

@burnspbesq wrote: I am not clear as to why we are revisiting the subject of McArdle. Is there new information that casts doubt on the long-standing consensus that she is biased, intellectually dishonest, and generally unworthy of being taken seriously?

Perhaps you are unfamiliar with one of the ‘tubes’ less endearing obsessions: dead-horse beating. If we stopped beating dead horses on the web, traffic would plummet to almost zero. Echo chambers need echoes!

I want one of those Pie filters that blocks all the endless repetitions and “me, too” comments.

Howlin Wolfe

@FMguru: How true that is! That’s why their think tanks should be called “The Institutes of the Foregone Conclusion”.

Xanthippas

Dude, I think that like every day of the week. Specifically, when I get online in the morning and read the very first thing I see by a right-winger.

asiangrrlMN

I read all four parts of the epic smackdown, and it left me wanting more. Yum yum. Kudos to Tom Levenson for systematically dismantling the nonsense of MM2. It’s needed because she gets so much fucking internet-time, for whatever reason. I have yet to be convinced that she can actually think, but I will concede that thinking may be within her abilities (barely).

Gregory

@Xanthippas:

That McArdle (who has been writing about the things that matter in real people’s lives as if it were a game since at least the runup to the Iraq War, under the pseudonym “Jane Galt”) is tolerated at all, let alone taken seriously, is the true measure of our toxic media culture.

Anne Laurie

@ericblair:

I so need to find a way to get this into the Lexicon!